A New Era in AI Storage Pioneered by the Coexistence of HBM and SSDs - Unpacking Kioxia's Strategy

Enabling flexible and diverse memory expansion

March 17, 2026

With the adoption of generative AI driving explosive growth in the volume of data, storage is becoming increasingly important at data centers. Kioxia is proposing, in rapid succession, high-capacity SSDs, broadband optical SSDs, 100 million IOPS SSDs, and other innovative technologies that leverage its next-generation BiCS FLASH™ memory technology and are aimed at new AI storage infrastructure.

The evolution and spread of AI technology show no signs of slowing down. The volume of computation and data is dramatically increasing, and the calls for faster processors and higher capacity storage are greater than ever. While such calls are driving the demand for GPUs and high bandwidth memory (HBM), they are also highlighting challenges in terms of AI data center computing architecture and storage. Amidst such developments, Kioxia Corporation (Kioxia) is going on the offensive with respect to storage solutions for data centers. What kind of SSDs are needed in next-generation AI storage infrastructure? To learn more, we spoke with Masafumi Takahashi, Senior Fellow, Frontier Technology R&D Institute Research Strategy Planning Office, Kioxia, and Professor Makoto Ikeda from the Graduate School of Engineering at The University of Tokyo, who has been involved in semiconductor system design research for many years.

Growing need for higher capacity AI storage with the spread of generative AI

High-capacity and low-cost HDDs have long been used in data center storage. In particular, HDDs were often adopted for archive use in long-term data storage. However, that situation is changing due to the explosive spread of generative AI. The adoption of SSDs in AI data centers is increasing due to their superior read performance, durability and power efficiency. Professor Ikeda explains, “With the volume of data increasing at an accelerated rate, it is not a question of whether we should use HDDs or SSDs, as we are at the stage where both must be increased.” Meanwhile, AI storage infrastructure is being forced to undergo a major transformation in terms of architecture.

The limits that HBM will eventually face

The reason behind this transformation is the existence of HBM. With its extremely high bandwidth, HBM is able to maximize the performance of the GPUs that perform high-speed calculations on large amounts of data. Therefore, the goal is to increase the performance of GPUs by increasing the number and capacity of the equipped HBM. Professor Ikeda explains, “The performance of NVIDIA GPUs has increased in proportion to the increase in HBM capacity. Viewed that way, it is clear that increasing the capacity in AI processing is the right answer.”

At the same time, HBM faces significant challenges. The first challenge is cost. HBM, which stacks and integrates DRAM at high density using through-silicon vias (TSVs), is simply too expensive. Takahashi explains, “Without a doubt, HBM is currently the optimal solution for maximizing GPU performance. However, the high cost is the greatest bottleneck to achieving further increases in capacity.” Other problems include heat generation from stacking the DRAM and power consumption due to the need to periodically refresh the data.

Professor Ikeda points out that the memory size is also a problem. Semiconductors generally get smaller as the process becomes more refined. However, DRAM has already stopped scaling with respect to the fabrication process. “In principle, DRAM will not function as memory unless it possesses a certain capacitance, which makes miniaturization inherently challenging,” said Professor Ikeda. Hyperscalers are also aggressively investing in server vendors, but they cannot invest infinite amounts of money. Given the cost constraints, eventually there will be a limit to the increased speed and performance of AI processing that relies solely on higher HBM capacity. As data continues to surge and tests the limits of technology, the importance of storage becomes more critical than ever.

Evolution of BiCS FLASH™ flash memory, which supports the backbone of SSDs

Kioxia presented the strategic direction of flash memory and storage that meets AI requirements in August 2025 at the US-hosted FMS: the Future of Memory and Storage conference, which attracted significant attention. The keynote speech was attended by approximately 3,000 people.

Kioxia explains that SSD requirements differ according to the AI workflow, such as training, inference, RAG (retrieval-augmented generation), and grounding. To satisfy each of these requirements, Kioxia will develop SSDs that achieve high capacity, high bandwidth, and high IOPS through low latency.

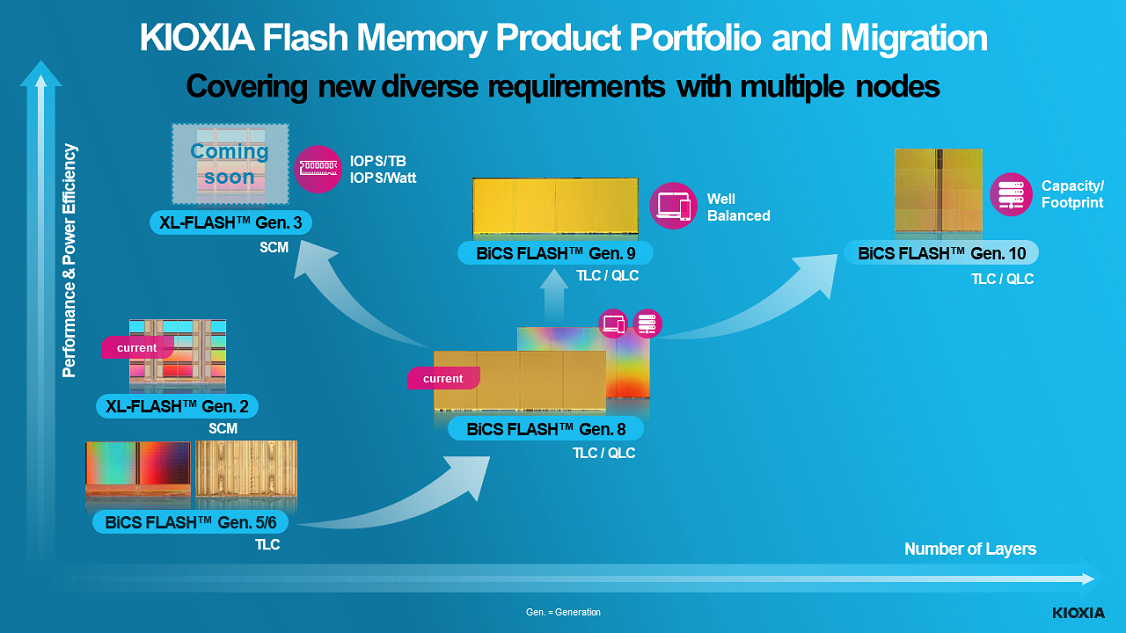

The technology that supports the backbone of such SSDs is KIOXIA BiCS FLASH™ flash memory. At FMS, Kioxia highlighted two key directions for the evolution of BiCS FLASH™ technology to meet the needs of various applications: the pursuit of cost performance and the pursuit of high capacity and high performance. BiCS FLASH™ generation 9 products will apply the stacking technology already used in the mass production of generation 8 together with the innovative CBA (CMOS directly Bonded to Array) process technology to increase performance and power efficiency while controlling costs. BiCS FLASH™ generation 10 products will apply a new stacking technology as part of a plan to achieve 332 layers of high-density stacking and a significant increase in bit density.

As for high-capacity SSDs, a 245.76 TB model was added to the KIOXIA LC9 Series currently under development. This model is designed to store the massive vector databases used in generative AI. This announcement also generated significant excitement at FMS and received a “Best of Show” award.

The company is also developing third generation XL-FLASH™ storage class memory (SCM) to support high IOPS SSDs. Its latency has been reduced to 10% or less of that of current BiCS FLASH™ memory. One noteworthy product is the Super High IOPS SSD, which is currently being developed in cooperation with NVIDIA. Combining XL-FLASH with a new controller, it aims to achieve 10 million IOPS or more. Due to the growth of generative AI, SSDs now require IOPS that are literally an order of magnitude higher than before, reaching ten or even one hundred times the IOPS of conventional SSDs. While Takahashi refers to this requirement as a “very high hurdle,” Kioxia plans to achieve 10 million IOPS with second generation XL-FLASH and PCIe® 6.0 in 2026, and 100 million IOPS with third generation XL-FLASH and PCIe 7.0 in 2027. Kioxia, which has been developing XL-FLASH for years, views the generative AI era as a major business opportunity for SCM. Takahashi says, “We hope to take advantage of this trend in one fell swoop.”
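To give a feel for what these IOPS targets mean in terms of data rate, the following is a rough back-of-the-envelope sketch. The article does not state the I/O size behind the IOPS figures, so the 512-byte and 4 KiB sizes below are assumptions chosen purely for illustration, and the function name is hypothetical.

```python
def throughput_gb_per_s(iops: int, io_bytes: int) -> float:
    """Data rate in GB/s (10**9 bytes per second) for a given IOPS and I/O size."""
    return iops * io_bytes / 1e9

# IOPS targets taken from the article
TARGETS = {"2026 target": 10_000_000, "2027 target": 100_000_000}

# Assumed I/O sizes in bytes (NOT stated in the article)
ASSUMED_IO_SIZES = [512, 4096]

rates = {
    (label, size): throughput_gb_per_s(iops, size)
    for label, iops in TARGETS.items()
    for size in ASSUMED_IO_SIZES
}

for (label, size), rate in rates.items():
    print(f"{label}: {size}-byte I/O -> {rate:.2f} GB/s")
```

Under these assumed sizes, 10 million IOPS of 512-byte reads corresponds to roughly 5 GB/s, and 100 million IOPS to roughly 51 GB/s. This illustrates why each IOPS target is paired with a newer, faster interface generation.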

“Avoiding the consumption of precious DRAM” with RAG

For SSDs used in RAG and grounding, Kioxia is focusing its efforts not only on hardware such as the KIOXIA LC9 Series but also on proposals for KIOXIA AiSAQ™ (AiSAQ) software. AiSAQ is software for using SSDs with RAG. RAG is a technology that increases the accuracy of generative AI responses by combining company databases and other external sources of information with large language models. Typically, RAG loads indexed data into DRAM and searches it there. Using AiSAQ makes it possible to search data while it is stored on an SSD without loading it into DRAM. “RAG uses an extremely large amount of data, so we regard the use of SSDs as an effective approach and a business opportunity. DRAM has limited capacity, so the fact that using AiSAQ prevents the CPU from monopolizing the DRAM is important,” said Takahashi. Kioxia released AiSAQ as open source with the goal of increasing the importance of using SSDs with the adoption of RAG.
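The general idea of searching vectors where they sit on storage, rather than first loading the whole index into DRAM, can be sketched as follows. This is not AiSAQ's algorithm or API; it is a minimal brute-force stand-in that memory-maps a file and reads one vector at a time. The file layout, the dimension `DIM`, and the function names are all assumptions made for this example.

```python
import heapq
import mmap
import os
import struct
import tempfile
from array import array

DIM = 4  # hypothetical embedding dimension for this toy example

def write_vectors(path, vectors):
    """Store vectors back to back as float32, standing in for an index file on an SSD."""
    with open(path, "wb") as f:
        for v in vectors:
            array("f", v).tofile(f)

def search_on_disk(path, query, k):
    """Return the k nearest (squared_distance, row) pairs, decoding one row at a time."""
    row_bytes = DIM * 4  # four bytes per float32 component
    with open(path, "rb") as f, mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
        n_rows = len(mm) // row_bytes

        def dist(i):
            # Only this row is decoded; the rest of the file stays on storage
            # (or in the OS page cache), not in a Python-side copy.
            row = struct.unpack_from(f"{DIM}f", mm, i * row_bytes)
            return sum((a - b) ** 2 for a, b in zip(row, query))

        return heapq.nsmallest(k, ((dist(i), i) for i in range(n_rows)))

vectors = [
    [0.0, 0.0, 0.0, 0.0],
    [1.0, 1.0, 1.0, 1.0],
    [0.1, 0.0, 0.0, 0.0],
    [5.0, 5.0, 5.0, 5.0],
]
with tempfile.TemporaryDirectory() as d:
    path = os.path.join(d, "vectors.f32")
    write_vectors(path, vectors)
    top2 = search_on_disk(path, [0.0, 0.0, 0.0, 0.0], k=2)
    nearest_rows = [row for _, row in top2]

print(nearest_rows)
```

A production system would use an approximate index rather than scanning every row. The point here is only that the application's working memory stays small regardless of how large the vector file grows.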

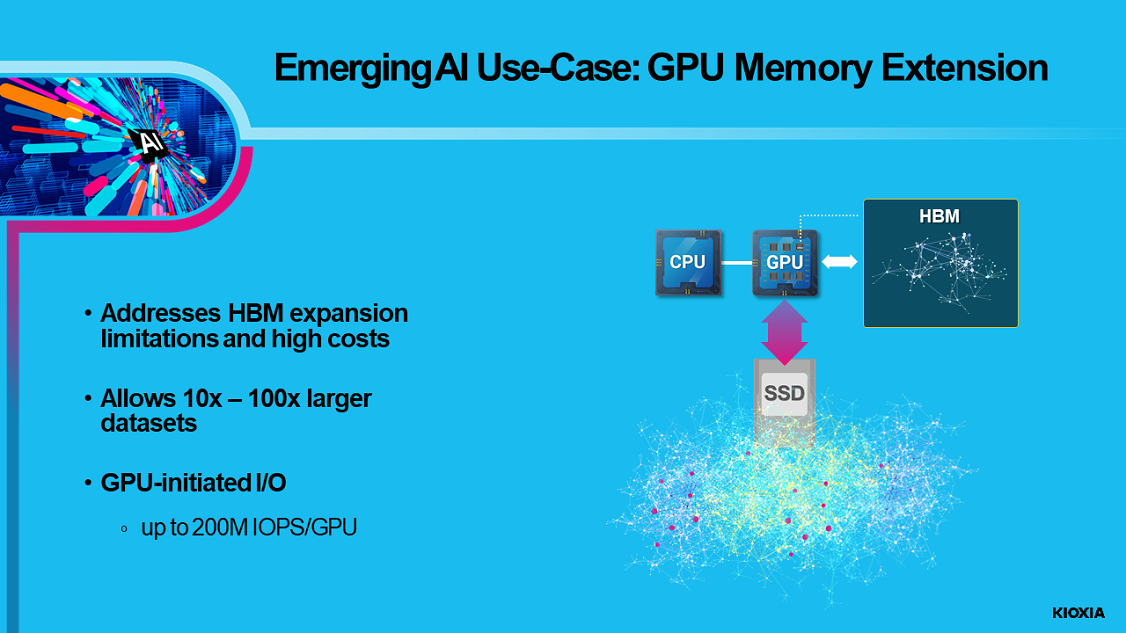

Flexible and diverse next-generation AI storage centered around SSDs

The SSD and BiCS FLASH™ technologies announced by Kioxia at FMS depict next-generation AI storage that is flexible, diverse, and easy to expand. As examples, Kioxia cites “GPU memory extension” and “near-GPU caching.” GPU memory extension addresses the HBM expansion challenge by having the SSD and GPU directly exchange data. Near-GPU caching places the SSD near the GPU and stores a certain amount of data from the data lake on the SSD. This decreases the number of times that the GPU accesses the data lake over the network, which increases data access efficiency.
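The effect of near-GPU caching on data lake traffic can be illustrated with a small simulation. The article does not say which eviction policy such a cache would use, so the least-recently-used (LRU) policy below is an assumption, as are the class name `NearGpuCache` and the `fetch_from_data_lake` callback.

```python
from collections import OrderedDict

class NearGpuCache:
    """Toy model of an SSD cache that sits between a GPU and a remote data lake."""

    def __init__(self, capacity, fetch_from_data_lake):
        self.capacity = capacity           # number of blocks the local SSD can hold
        self.fetch = fetch_from_data_lake  # hypothetical slow network read
        self.blocks = OrderedDict()        # insertion order doubles as recency order
        self.remote_reads = 0

    def read(self, key):
        if key in self.blocks:
            self.blocks.move_to_end(key)   # mark as most recently used
            return self.blocks[key]
        self.remote_reads += 1             # cache miss: go over the network
        value = self.fetch(key)
        self.blocks[key] = value
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)  # evict the least recently used block
        return value

cache = NearGpuCache(capacity=2, fetch_from_data_lake=lambda key: f"data-{key}")
accesses = ["a", "b", "a", "a", "c", "b"]
for key in accesses:
    cache.read(key)

print(f"{cache.remote_reads} remote reads for {len(accesses)} accesses")
```

In this toy access pattern, two of the six reads are served locally, so the data lake is contacted four times instead of six. A real workload with more reuse would see a larger reduction.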

Professor Ikeda says that such an architecture should be able to leverage the inherent advantages of SSDs. He explains, “I think that the GPU vendors are also considering what the next-generation GPU architecture should look like. The key to running a GPU at high speed depends on whether the necessary data arrives just in time. However, since the AI computations consist of a predetermined set of tasks to perform, if they are scheduled in advance, the SSD should be able to sufficiently compensate for any slight latency compared to DRAM. If the programmer knows in advance where the data will be accessed and can do the programming work, I think that connecting the SSD to the GPU will be much easier to use than connecting it to the CPU. This will instead maximize the benefits of SSDs which are high capacity and low power consumption.”
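Professor Ikeda's point about scheduling accesses in advance can be sketched as a simple prefetch pipeline: because the order of data accesses is known ahead of time, a background worker can load the next blocks while the current one is being processed. The sketch below assumes a hypothetical `load_from_ssd` function and uses plain Python threads in place of real GPU and SSD hardware.

```python
import queue
import threading

def load_from_ssd(block_id):
    """Hypothetical stand-in for an SSD read; returns a small block of data."""
    return [block_id] * 4

def run_schedule(schedule, depth=2):
    """Process blocks in a known order while a prefetcher stays up to `depth` blocks ahead."""
    ready = queue.Queue(maxsize=depth)  # bounded, so prefetching cannot run away

    def prefetcher():
        for block_id in schedule:
            ready.put(load_from_ssd(block_id))  # blocks while the queue is full
        ready.put(None)                         # sentinel: schedule finished

    threading.Thread(target=prefetcher, daemon=True).start()

    results = []
    while (block := ready.get()) is not None:
        results.append(sum(block))  # stand-in for the computation on each block
    return results

results = run_schedule([3, 1, 2])
print(results)
```

The bounded queue plays the role of a small staging buffer. As long as loads finish before the consumer needs them, the higher latency of the storage device is hidden behind computation.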

In the beginning, SSDs were often used as cache for HDDs in data center storage. Professor Ikeda adds, “SSDs clearly have a higher capacity than DRAM, so instead of using them as cache, which is not optimal in principle, they are being used in a straightforward manner that leverages SSD advantages to store large amounts of data.”

“It is almost impossible to think that the use of AI will disappear. Going forward, we may even see the emergence of ‘AI for using AI intelligently.’ If that happens, the power problem will become even more critical. I believe that AI storage infrastructure must continue to evolve to conserve power.”

Kioxia continues to evolve even with the limits of Moore's Law

To date, semiconductors have grown according to scaling laws. The increases in DRAM capacity and the number of transistors integrated on a CPU have also been supported by scaling laws. However, growth based on such scaling laws has largely stopped in recent years. Professor Ikeda explains, “In the midst of this development, flash memory is growing. In other words, SSDs are perhaps a field that continues to evolve through scaling laws. This is certainly encouraging for me as someone working in the semiconductor industry.” It has long been said in the semiconductor industry that Moore's Law no longer applies. However, Professor Ikeda continues by explaining, “In terms of economic principles, it hasn't stopped yet, and the only areas showing signs of life are the number of GPU transistors and the evolution of flash memory.” Kioxia continues to evolve while supporting the latest cutting-edge trends.

Professor Ikeda emphasizes that having such flash memory and SSD manufacturers in Japan helps maintain a sound competitive environment for semiconductors, which is necessary for developing semiconductor talent and the individuals involved in digital circuit design, in particular.

“Kioxia has led the world in memory technology not only by inventing NAND flash memory in 1987, but also by introducing application of multi-level cell technology to a NAND flash memory product in 2001, announcing 3D flash memory technology in 2007, and more. Currently, memory manufacturers are competing based on high-density stacking technologies for memory cells, and there is no doubt that such innovation will be the key to meeting the massive demand for data in the future. I believe that these innovations will be very helpful for future AI evolution,” said Professor Ikeda.

AI storage infrastructure is undergoing major changes against a backdrop of explosive increases in the volume of data. Amid cost constraints on capacity expansion, Takahashi expects, “Once people recognize that introducing SSDs improves performance with minimal investment, the adoption of SSDs will likely expand further.” The high-capacity, broadband, and high IOPS storage solutions created by Kioxia are expected to elevate the importance of SSDs in data centers, open up new use cases, and support the development of the AI era.

- PCIe is a registered trademark of PCI-SIG.

- Company names, product names, and service names may be trademarks of third-party companies.

Reprinted from: EE Times Japan

Translated from the October 14, 2025 edition of EE Times Japan

This article was translated with permission from EE Times Japan.

Department names and titles are as of the time of the interview.