New Era of On-device AI Driven by High-speed UFS 5.0 Storage

Reprinted from: EE Times.com

Reprinted from content published in EE Times.com on February 25, 2026

This content is used with permission from EE Times Japan.

Department names and titles are as of the time of the interview.

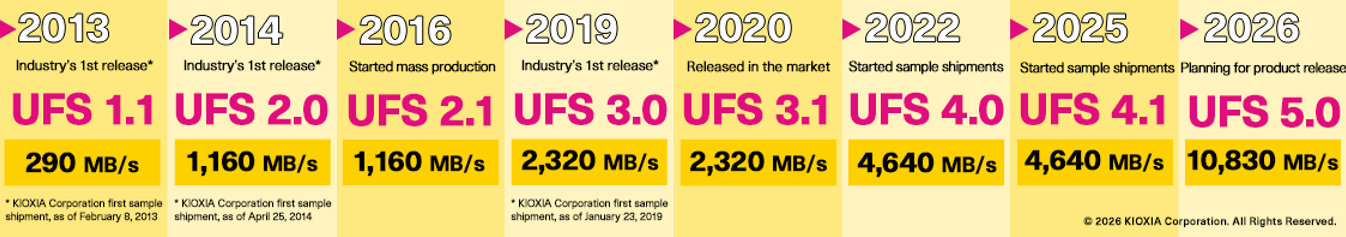

UFS 5.0, featuring a high-speed interface with a theoretical transfer rate of 10.8 GB/s, is almost here. KIOXIA believes that the adoption of UFS 5.0 in smartphones will further advance on-device AI. This is because high-speed, high-capacity UFS 5.0 will allow smartphones to store large language models (LLMs) and retrieval-augmented generation (RAG) databases.

On-device AI features on smartphones are rapidly evolving

AI smartphones (smartphones equipped with AI features) are rapidly gaining popularity. Notably, high-end AI smartphones released since 2023 incorporate large language models (LLMs) and feature additional capabilities that make use of generative AI. Unlike cloud AI, on-device AI (the ability to run generative AI on devices) does not require a network connection, thus ensuring privacy while also offering highly convenient services to users, such as real-time translation.

The key to further enhancing the performance of on-device AI lies in universal flash storage (UFS), a flash storage standard designed for smartphones and other mobile devices. KIOXIA is confident that UFS 5.0, the latest UFS standard, has the potential to revolutionize on-device AI.

The high-speed interface of UFS 5.0 will make LLMs smarter

UFS is currently the mainstream storage solution for smartphones. High-end models commonly use UFS 4.0/4.1, and KIOXIA is preparing to meet demand by mass-producing UFS 4.0/4.1-compliant products for smartphones.

In October 2025, the Joint Electron Device Engineering Council (JEDEC), an industry group that sets standard specifications for semiconductor products, announced that the development of UFS 5.0 specifications was nearly complete.

The most significant feature of UFS 5.0 is its theoretical transfer speed, which reaches 10.8 GB/s (10,830 MB/s) [1]. This is more than double the speed of UFS 4.1 (4,640 MB/s) found in high-end smartphones, allowing for dramatically faster data transfer. Takumi Watanabe of the Memory Technical Marketing Managing Department, Memory Division at KIOXIA, explains how the proliferation of AI has drastically increased demand for faster UFS interfaces. “Until now, the development of standards has led the way with transfer speeds doubling roughly every four years. However, in recent years, with the evolution of on-device AI technology, smartphone manufacturers have been steadily demanding faster speeds,” he adds.

Such remarkably faster transfer speeds have the potential to contribute significantly to the advancement of on-device AI functionality because they enable smartphones to incorporate larger LLMs.

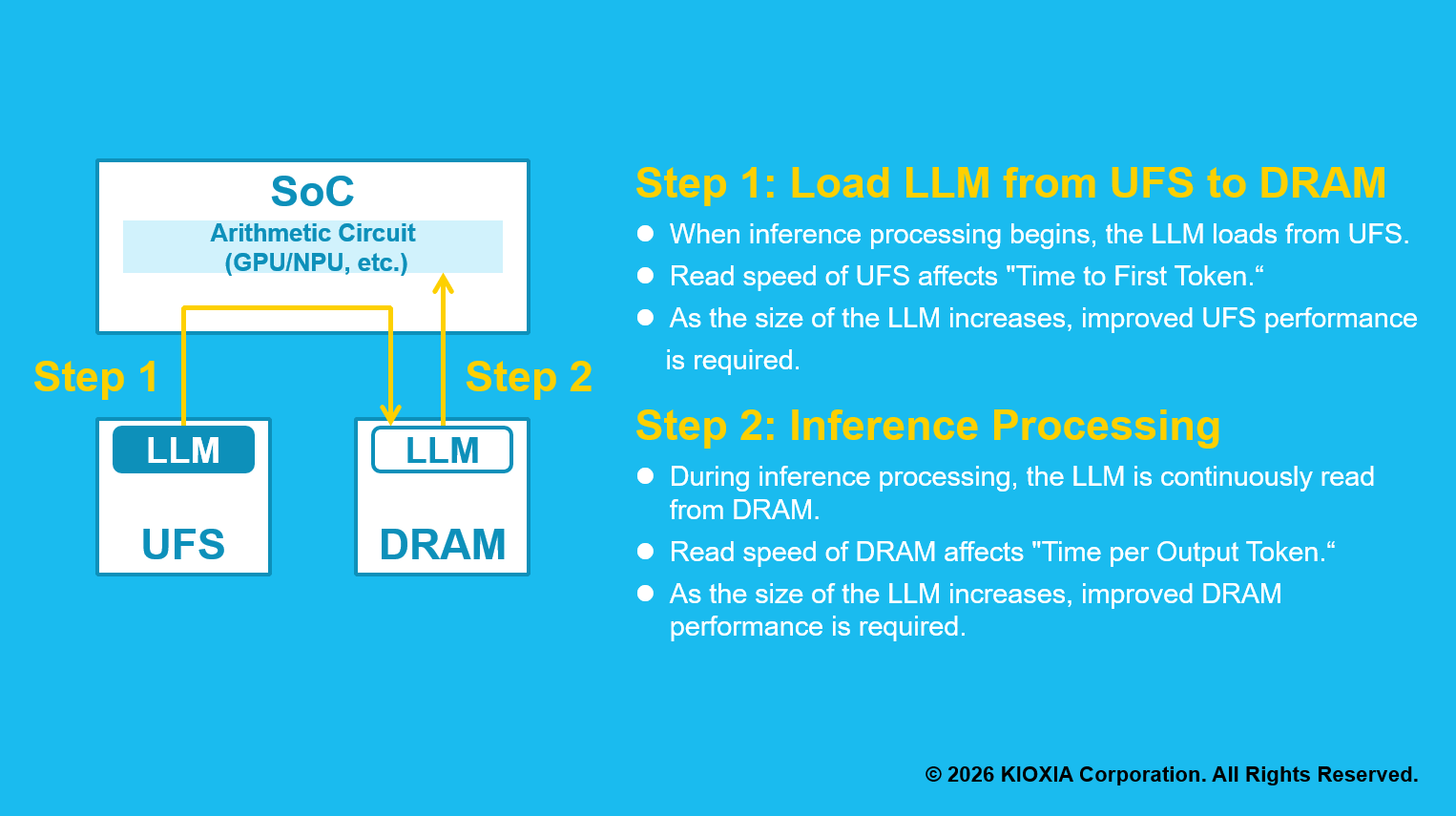

When using generative AI on a smartphone, the LLM stored in UFS is first loaded into DRAM, which is the system memory. Subsequently, the system on a chip (SoC) reads the LLM parameters from DRAM and performs computational processing (inference).

The problem is that in recent years, the number of LLM parameters has tended to increase in order to make generative AI smarter. The number of parameters in LLMs installed in on-device AI is estimated to be around 3 to 4 billion. When quantized to 8-bit (INT8), this equates to a capacity of around 3 to 4 GB [2]. “While UFS 4.0/4.1 transfer speeds can handle this scale in under a second, as LLM sizes grow larger, load times increase, and so does the time it takes for users to receive their first response (time to first token),” says Watanabe. If larger LLMs are to be implemented to make generative AI smarter, higher transfer speeds become essential for UFS.
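The relationship between parameter count, quantization width, and storage footprint can be sketched in a few lines. This is a rough estimate only: it assumes every parameter is stored at the same bit width and ignores tokenizer files, metadata, and any runtime buffers. The function name `model_size_gb` is illustrative, not part of any library.

```python
# Bytes per GB for capacity figures, following the article's notes
# (1 GB = 1,073,741,824 bytes).
BYTES_PER_GB = 1_073_741_824


def model_size_gb(num_params: int, bits_per_param: int = 8) -> float:
    """Approximate weight footprint in GB, assuming a uniform bit width."""
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / BYTES_PER_GB


if __name__ == "__main__":
    for params in (3_000_000_000, 4_000_000_000):
        size = model_size_gb(params, bits_per_param=8)
        print(f"{params / 1e9:.0f}B parameters at INT8: {size:.2f} GB")
```

Under these assumptions, 3 and 4 billion INT8 parameters come to roughly 2.79 GB and 3.73 GB, in line with the "around 3 to 4 GB" figure quoted above.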

With UFS 5.0 transfer speeds reaching up to 10.8 GB/s, large LLMs can be loaded quickly, even with an increased number of parameters. This directly improves the usability of on-device AI. “While UFS 4.0/4.1 can handle LLMs around 3 to 4 GB in size, UFS 5.0 can support models up to about 10 GB,” says Watanabe.
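As a back-of-the-envelope check on these figures, the sketch below divides a model's size by the theoretical interface speed. It uses the article's unit definitions (1 GB of capacity = 1,073,741,824 bytes; 1 MB/s = 1,000,000 bytes/s) and assumes the interface is the only bottleneck, so the results are lower bounds rather than measured load times. The helper name `load_time_s` is illustrative.

```python
BYTES_PER_GB = 1_073_741_824      # capacity unit, per the article's notes
BYTES_PER_MB_PER_S = 1_000_000    # transfer-rate unit, per the article's notes

# Theoretical interface speeds quoted in the article, in MB/s.
INTERFACE_MB_S = {"UFS 4.1": 4_640, "UFS 5.0": 10_830}


def load_time_s(model_gb: float, interface_mb_s: float) -> float:
    """Lower-bound time in seconds to read a model at the theoretical rate."""
    return model_gb * BYTES_PER_GB / (interface_mb_s * BYTES_PER_MB_PER_S)


if __name__ == "__main__":
    for name, speed in INTERFACE_MB_S.items():
        for size_gb in (4, 10):
            t = load_time_s(size_gb, speed)
            print(f"{name}: {size_gb} GB model -> at least {t:.2f} s")
```

With these idealized numbers, a 4 GB model takes about 0.93 s on UFS 4.1, while a 10 GB model takes about 2.31 s on UFS 4.1 and about 0.99 s on UFS 5.0. Real devices will be slower, since actual throughput depends on the host system and read conditions.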

According to Watanabe, KIOXIA’s UFS technology has three key strengths. The first is the flash memory itself. The 3D flash memory used in KIOXIA’s UFS 5.0 is the latest BiCS FLASH generation 8 technology [3], which employs CMOS directly bonded to array (CBA) technology to precisely bond two wafers together. In CBA technology, the CMOS circuitry that controls the memory cells and the memory cell array are manufactured on separate wafers and then bonded together. Because each wafer can be manufactured using a process optimized for it, CBA enables significant improvements in flash memory performance, power efficiency, and bit density.

The second strength is the flash memory controller technology developed in-house by KIOXIA. UFS 5.0 uses the MIPI Alliance standard M-PHY version 6.0 for the physical layer and UniPro version 3.0 for the protocol. KIOXIA was involved in the formulation of these standards from the early stages and has been developing a high-speed interface for UFS 5.0 for some time. Furthermore, by optimizing the controller’s power supply design, KIOXIA has achieved both high performance and low power consumption for mobile devices where battery operation is essential.

The third strength is error correction code (ECC) technology. Powerful ECC allows the flash memory to deliver its maximum performance.

Utilizing RAG on your smartphone

In addition to making it possible to accommodate larger LLMs, the fast transfer speeds of UFS 5.0 open up the possibility of storing retrieval-augmented generation (RAG) databases. RAG is a technology for improving the accuracy of generative AI responses by combining LLMs with external information, such as corporate databases.
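The basic RAG flow described here (retrieve relevant external text, then hand it to the LLM together with the question) can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the passages, the word-overlap scoring, and the function names are hypothetical, and production systems typically retrieve with vector embeddings rather than word matching. No LLM is called; the sketch stops at the augmented prompt.

```python
def score(query: str, passage: str) -> int:
    """Count distinct lowercase words shared by the query and the passage."""
    return len(set(query.lower().split()) & set(passage.lower().split()))


def retrieve(query: str, passages: list[str], k: int = 1) -> list[str]:
    """Return the k passages with the highest word overlap."""
    return sorted(passages, key=lambda p: score(query, p), reverse=True)[:k]


def build_prompt(query: str, passages: list[str], k: int = 1) -> str:
    """Prepend the retrieved passages to the question as context."""
    context = "\n".join(retrieve(query, passages, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    # Hypothetical "corporate database" entries.
    database = [
        "The cafeteria opens at 8 am.",
        "Expense reports are due on Friday.",
    ]
    print(build_prompt("When are expense reports due?", database))
```

The point of the sketch is the division of labor: the database supplies the facts, and the model only has to reason over the text it is given.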

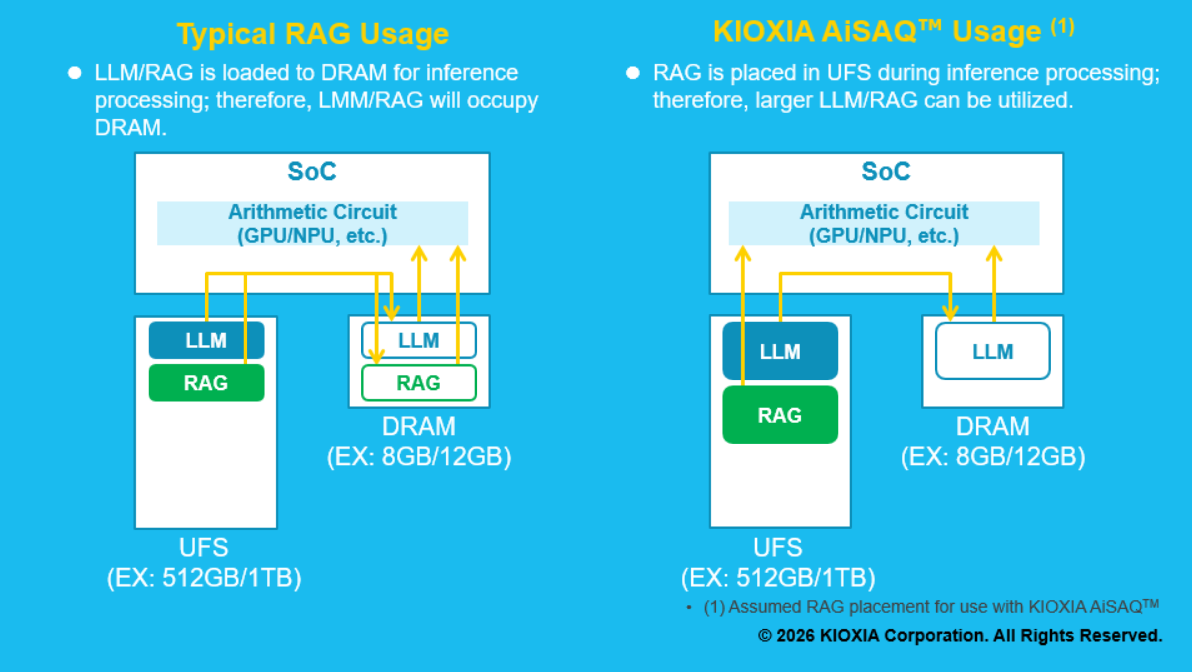

KIOXIA has released its open-source data search software, KIOXIA AiSAQ [3], for the use of RAG in data centers. KIOXIA AiSAQ allows data to be searched while the RAG database remains stored on an SSD, eliminating the need to consume DRAM. KIOXIA is also considering applying KIOXIA AiSAQ to smartphones and has completed the technical verification stage. This would allow the RAG database to be stored on UFS rather than DRAM, making it possible to increase the capacity of the RAG database.
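To make the idea of searching a database that stays on storage more concrete, here is a minimal sketch that reads vectors from a file one record at a time and keeps only the best k matches in memory. It is not the algorithm used by KIOXIA AiSAQ: it is a brute-force linear scan chosen for brevity, and the file layout, vector dimension, and function names are all assumptions made for illustration.

```python
import heapq
import os
import struct
import tempfile

DIM = 4                              # assumed vector dimension
RECORD = struct.Struct(f"<{DIM}f")   # one little-endian float32 vector


def write_vectors(path: str, vectors) -> None:
    """Store vectors back to back in a flat binary file."""
    with open(path, "wb") as f:
        for vec in vectors:
            f.write(RECORD.pack(*vec))


def search_on_storage(path: str, query, k: int = 2) -> list[int]:
    """Return indices of the k nearest vectors, nearest first.

    Only one record plus a k-entry heap is held in memory at a time.
    """
    best = []  # min-heap of (-squared_distance, index); root is the worst kept
    with open(path, "rb") as f:
        index = 0
        while True:
            chunk = f.read(RECORD.size)
            if len(chunk) < RECORD.size:
                break
            vec = RECORD.unpack(chunk)
            dist = sum((a - b) ** 2 for a, b in zip(vec, query))
            item = (-dist, index)
            if len(best) < k:
                heapq.heappush(best, item)
            elif item > best[0]:
                heapq.heapreplace(best, item)
            index += 1
    return [idx for _, idx in sorted(best, reverse=True)]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "vectors.bin")
        write_vectors(path, [(0, 0, 0, 0), (1, 1, 1, 1), (5, 5, 5, 5)])
        print(search_on_storage(path, (1, 1, 1, 1), k=2))
```

Memory use in this sketch is independent of the database size, which is the property the paragraph above highlights. A linear scan reads every record on each query, however, so practical systems rely on index structures that touch far less data per search.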

With increases in DRAM capacity being limited by cost concerns, storing the LLM and RAG databases on high-capacity UFS can potentially improve the user experience of on-device AI.

Optimizing the entire AI system by separating AI’s reasoning capabilities from its knowledge

UFS 5.0 will bring new optimizations to on-device AI system configurations and has the potential to change the role of storage in smartphones. This is the opinion of Jun Deguchi, Group Manager of the AI & System Research Center at KIOXIA’s Frontier Technology R&D Institute.

As previously explained, one way to make AI smarter is to increase the scale of the LLM. Increasing the number of LLM parameters to store knowledge raises the computational load, which requires improving the GPU’s computing performance. Deguchi describes this as reproducing knowledge through calculations.

“Currently, both AI’s reasoning power (inferential performance) and knowledge (data) are expressed through calculations. However, this upward growth trajectory will eventually reach its limit, both in terms of cost and power consumption,” says Deguchi. Wouldn’t it then make sense to separate AI’s reasoning ability from its knowledge? KIOXIA has been considering this option for years and has been conducting research and development in both flash memory and software to achieve this separation, even before generative AI became widespread. KIOXIA AiSAQ is one key result of such efforts.

Ultimately, if reasoning ability and knowledge can be separated, the GPU can be used only for reasoning (inference), while flash memory can handle the knowledge storage portion. This is a natural approach that leverages each device’s inherent strengths. Since the computational load is reduced, it leads to a reduction in GPU power consumption, and it also expands options such as adopting low-power AI accelerators. “By deploying semiconductor devices where they perform best, we can optimize the entire AI system in a fundamentally new way,” says Deguchi.

Towards the future when memory shapes AI’s individuality

In an AI world where data becomes knowledge, the role of flash memory and UFS will also change. “Until now, they were seen merely as entities for pooling data for AI training,” says Deguchi. However, KIOXIA believes they will now take on the role of shaping AI’s individuality.

“Human individuality is shaped by memories and experiences. Going forward, even AI systems based on the same LLMs will likely become more personalized and distinctive as external memories and experiences, such as RAG databases, are added. Flash memory is precisely what will carry the memories and experiences that form AI’s individuality, and we are the ones developing that technology. This is directly connected to our mission of ‘uplifting the world with “memory”’,” says Deguchi.

UFS 5.0 will further enhance the use of LLMs and RAG databases thanks to high-speed data transfer. KIOXIA’s UFS 5.0 products, incorporating the company’s cutting-edge technology, will play a vital role in supporting evolving AI systems and making on-device AI smarter and more personalized.

Notes

- The information described in this article is accurate at the time of interview.

- [1] 1 GB/s is calculated as 1,000,000,000 bytes/s, and 1 MB/s is calculated as 1,000,000 bytes/s. These are theoretical values calculated from the interface speed; the speed on a user’s device is not guaranteed. The read/write speed may vary depending on the host system, read/write conditions, file size, etc.

- [2] 1 GB is calculated as 1,073,741,824 bytes.

- [3] “BiCS FLASH” and “KIOXIA AiSAQ” are trademarks of KIOXIA Corporation.
